Enportal/5.4/admin/enPortal installation/installation/clustering and failover

Architecture

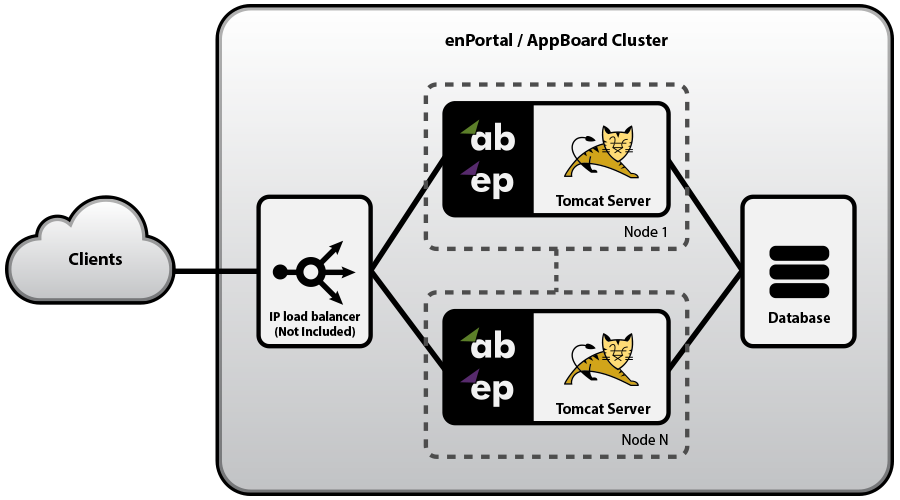

The enPortal/AppBoard system is a web application that runs inside an Apache Tomcat server and accesses a JDBC-accessible RDBMS or clustered RDBMS. The enPortal/AppBoard web application server scales horizontally by replicating onto additional servers/platforms.

Horizontal scaling is accomplished by adding new enPortal/AppBoard servers to the system in order to grow the capacity of the enPortal/AppBoard cluster. The diagram above shows a load-balanced cluster.

In the above example, assuming n=2, there are three options for configuring the availability of non-primary nodes:

- Load Balanced - Node 1 and Node 2 are fully operational at all times. The Load Balancer directs traffic across the two nodes based on standard load balancing techniques such as round-robin, fewest active sessions, smallest load, etc.

- Failover - All traffic is routed to the AppBoard server on node 1, unless node 1 is detected to be down, in which case traffic is automatically re-routed to node 2. Node 1 and Node 2 are powered up and available at all times.

- Cold Standby - All traffic is routed to the AppBoard server on node 1. If there is an outage on node 1, node 2 is brought online and traffic is re-directed.

Load Distribution

The Load Balancer can distribute sessions to one or more enPortal/AppBoard nodes using any standard load balancing algorithm (e.g. round-robin, smallest load, fewest sessions, etc.). The only requirement is that session affinity is maintained, so that a single user is always routed to the same enPortal/AppBoard node for the full duration of the session. enPortal/AppBoard manages the session handling for all of the Tomcat processes and integrated applications within the enPortal/AppBoard component of the solution. Because enPortal/AppBoard manages session state with integrated back-end web applications, switching between enPortal/AppBoard servers during a session can cause unexpected session time-outs and additional licensing costs.
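The affinity requirement can be illustrated with a minimal sketch: new sessions are assigned round-robin, and every later request with the same session identifier goes back to the same node. The class name `StickyRoundRobin` and the node labels are hypothetical and only serve the illustration; a real load balancer implements this in its own configuration rather than in application code.

```python
import itertools


class StickyRoundRobin:
    """Assign new sessions round-robin and keep each session on its node."""

    def __init__(self, nodes):
        nodes = list(nodes)
        if not nodes:
            raise ValueError("at least one node is required")
        self._cycle = itertools.cycle(nodes)
        self._assignments = {}  # session identifier -> node

    def route(self, session_id):
        # Only sessions seen for the first time consume a round-robin slot.
        if session_id not in self._assignments:
            self._assignments[session_id] = next(self._cycle)
        return self._assignments[session_id]
```

With two nodes, the first session lands on the first node, the second session on the second node, and any repeat request from the first session returns to the first node.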

The two session cookies used by enPortal/AppBoard are JSESSIONID and enPortal_sessionid. When configuring the Load Balancer for session affinity, it is recommended to use enPortal_sessionid to avoid any conflicts with other applications that may also have a JSESSIONID cookie. A test page (check.jsp) is available from Edge for purposes of testing the Load Balancer configuration. You can drop this test page into the /enportal/ directory of each server node in the system. If you then direct the Load Balancer to hit that page directly (e.g. https://<ipaddress>:<port>/enportal/check.jsp), it will return a 200 code if all segments of the enPortal system are running properly (including database access), and will return a 500 code if there is a failure.
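As a rough illustration of how a monitoring script could interpret the test page, the following sketch requests check.jsp and treats a 200 response as healthy and anything else (including a 500 or a connection failure) as unhealthy. The function names are hypothetical, and treating connection errors as "down" is an assumption of this sketch rather than documented behavior.

```python
import urllib.error
import urllib.request


def node_is_healthy(status_code: int) -> bool:
    """check.jsp returns 200 when all segments are running, 500 on failure."""
    return status_code == 200


def probe(url: str, timeout: float = 5.0) -> bool:
    """Request the health-check URL and report whether the node looks healthy."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return node_is_healthy(response.status)
    except urllib.error.HTTPError as err:
        # urllib raises HTTPError for 4xx/5xx responses; err.code is the status.
        return node_is_healthy(err.code)
    except OSError:
        # Connection refused, DNS failure or timeout: assume the node is down.
        return False
```

A call such as `probe("https://<ipaddress>:<port>/enportal/check.jsp")`, with the placeholders replaced by a real node address, would return `True` only when the page answers with a 200 code.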

Designing your enPortal/AppBoard system

The physical design of your enPortal/AppBoard system requires an examination of each of the following interrelated factors:

- Initial load (users) and expected growth

- Host hardware and operating system selection

- Network layer requirements

- Security

Of these factors, “initial load (users) and expected growth” is typically foremost in the customer’s mind when designing an enPortal/AppBoard system. The two user load metrics of most concern are the total number of users who have access to the system and the number of users logged in at one time.

The amount of load induced on a system by a single user is certainly not a constant. There are many factors that affect this load including: the network bandwidth between the user’s machine and the enPortal/AppBoard system, the performance of communications between elements in your integrated solution, the activities the user is performing, and the activity level of the user. However, the following guidelines will enable you to proceed with the design of your enPortal/AppBoard system:

- Use the general rule of “100 concurrent active end-users per node” to get an initial assessment of cluster size. System administrators can incur additional overhead, so if a system is going to have a large number of administrators this should also be taken into consideration, especially if they will be making concurrent changes to the system configuration.

- If your system needs to support over 200 concurrent users or if high availability is a key requirement for your system, then design your enPortal/AppBoard system as a cluster from the start (rather than a single box solution).

- A more accurate assessment of the scalability of your particular enPortal/AppBoard system can be determined by simulating end-user usage against a single enPortal/AppBoard node. A linear extrapolation of this value can then be used to determine the number of nodes in your cluster. However, because the addition of new nodes to an enPortal/AppBoard cluster is quite simple, it is often better to start with a single box or 2-node cluster to establish a baseline, and grow the cluster as dictated by real world needs.
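The sizing guidelines above amount to simple arithmetic, sketched below. The function names are hypothetical; the default of 100 users per node and the 200-user threshold come from the guidelines, and a measured per-node capacity from load simulation can be passed in place of the default for the linear extrapolation.

```python
import math

USERS_PER_NODE = 100     # general rule from the guidelines above
CLUSTER_THRESHOLD = 200  # above this, design as a cluster from the start


def estimate_nodes(concurrent_users: int, users_per_node: int = USERS_PER_NODE) -> int:
    """Linear extrapolation: nodes needed for the given concurrent user load."""
    if concurrent_users < 0 or users_per_node <= 0:
        raise ValueError("user counts must be non-negative and capacity positive")
    return max(1, math.ceil(concurrent_users / users_per_node))


def should_cluster(concurrent_users: int, high_availability: bool = False) -> bool:
    """Apply the 'over 200 users or high availability' guideline."""
    return high_availability or concurrent_users > CLUSTER_THRESHOLD
```

For example, 250 concurrent users at the default capacity gives an initial estimate of 3 nodes. This is only a starting point, since administrator activity and integration performance can shift the real figure.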

The following general guidelines should also be followed when designing your enPortal/AppBoard system:

- Memory selection: be sure to allocate enough physical memory to enPortal/AppBoard. A fully loaded enPortal/AppBoard node can require 4 GB or more of RAM.

- Database location: for security and availability purposes, the enPortal/AppBoard database is often hosted on its own server. In this case, minimize the latency of network communications between the application server and your database. A simple ping between each portal server and the database server will determine this latency. (Recommendation: <= 20ms latency)

- IP Load Balancer: a load balancer is necessary for clustered enPortal/AppBoard systems. The CITRIX NetScaler Server Appliance is a recommended hardware solution. The critical configuration for any IP Load Balancer solution is client-to-server affinity, which ensures that each web browser talks to the same enPortal/AppBoard server for the length of the user’s session.
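Where ICMP ping is blocked or a scripted check is preferred, the latency recommendation for the database location can be approximated as sketched below. This is an assumption-laden substitute for the ping suggested above: it times a TCP connection to the database port, which includes name resolution and is only a rough proxy for network round-trip time. The function names are hypothetical, and the host and port must be supplied by the caller.

```python
import socket
import statistics
import time

MAX_LATENCY_MS = 20.0  # recommendation from the database location guideline


def connect_time_ms(host: str, port: int, timeout: float = 2.0) -> float:
    """Time one TCP connection to host:port, in milliseconds."""
    start = time.perf_counter()
    with socket.create_connection((host, port), timeout=timeout):
        pass  # connection established; close immediately
    return (time.perf_counter() - start) * 1000.0


def latency_ok(samples_ms, limit_ms: float = MAX_LATENCY_MS) -> bool:
    """Compare the median of several samples against the recommended limit."""
    return statistics.median(samples_ms) <= limit_ms
```

Taking the median of several samples from each portal server reduces the effect of a single slow connection attempt.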

The host hardware and operating system selection is often dictated by external influences rather than enPortal/AppBoard specific benefits. This is not a concern due to enPortal/AppBoard’s platform independence. Support for scalability through clustering of many nodes makes Solaris, Windows, and Linux based solutions all viable for large deployments.

“Network layer requirements” and “Security” are often the same issue. Since enPortal/AppBoard is often used as an intermediary between a public audience and private resources, it needs to safely bridge the security gap between these two entities. The multi-tiered architecture allows an enPortal/AppBoard system to be segmented across multiple firewall-protected networks.

Licensing Requirements of a Multiple Node Solution

The higher availability and greater capacity of a multiple node solution have already been established. However, a multiple node solution may carry additional licensing requirements and other costs that should be planned for in advance. Consider the following before departing from a typical single-node solution:

- Each enPortal/AppBoard server node is a separate portal installation. Any filesystem changes (patches, configuration changes, etc.) that are required to maintain the solution must be performed on each node.

- An enPortal/AppBoard server license must be purchased for each server node.

- The load balancing device (not included with enPortal/AppBoard) must be acquired.

enPortal/AppBoard Replication for Clustering

The enPortal/AppBoard system is implemented on top of an Apache Tomcat application container, with its more dynamic configurations stored in a standalone database. It also contains design time file system artifacts that are used to store customizations such as Content Retrieval Service rule sets and configuration information for AppBoard Stacks. Thus, it is important to ensure that both the database and the file system artifacts are replicated and managed properly in a clustered system.

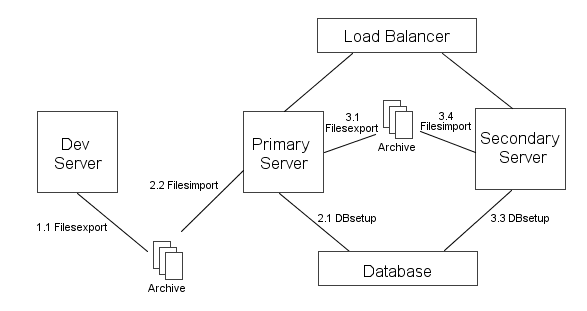

For a clustered system to work properly, it is essential that all of the enPortal/AppBoard instances remain in sync with one another. Because the same database is shared, it always remains in sync. In addition, however, the filesystems must also remain in sync. Any changes or customizations made to one instance need to be replicated across the other instance(s). This can be achieved by using the "filesexport" and "filesimport" commands, which are provided to migrate a set of enPortal/AppBoard customizations from one server to another. The filesexport command is configured, by default, to bundle up all of the stock configuration files. However, if you have any custom configuration files, you may need to modify the definitions of the file bundle that is generated by this command.

The configuration steps detailed below assume that an instance of enPortal/AppBoard is already up and running in the development environment. You are now ready to deploy enPortal/AppBoard in the production environment that uses a load balancer and two instances of enPortal/AppBoard (primary and secondary servers) which are connected to the load balancer.

- Preparation work

  - In the development instance of enPortal/AppBoard, create an archive from the dev environment by running the filesexport command.

  - Transfer the generated archive to the primary server's box.

- Importing the archive to the primary server.

  - Configure the primary server's database connectivity using the "portal dbsetup" command in ${TomcatHome}/bin directory.

  - In the primary server, import the archive by running the "portal filesimport" command in ${TomcatHome}/bin directory.

- Synchronizing content between the primary and the secondary enPortal/AppBoard instances.

  - In the primary server, create an archive by running the filesexport command.

  - Transfer the generated archive to the secondary server's box.

  - Validate the secondary server's database connectivity using the "portal dbsetup" command in ${TomcatHome}/bin directory.

  - In the secondary server, import the archive by running the "portal filesimport" command in ${TomcatHome}/bin directory.

- Modify the database configuration setting to support redundancy

  - Edit the file [AppBoardHome]\server\webapps\enportal\WEB-INF\config\hosts.properties

  - Locate the line: hosts.redundant=false

  - When running in a clustered configuration, change the setting to hosts.redundant=true
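The hosts.properties change in the last step can also be scripted when many nodes need the same edit. The following is a minimal sketch, assuming the file is plain `key=value` text; the function name `enable_redundancy` is hypothetical and the sketch operates on the file contents as a string, leaving reading and writing of the actual file to the caller.

```python
import re

# Matches the hosts.redundant line and captures everything up to the value.
_REDUNDANT_LINE = re.compile(
    r"^([ \t]*hosts\.redundant[ \t]*=[ \t]*)[^\r\n]*", re.MULTILINE
)


def enable_redundancy(properties_text: str) -> str:
    """Return the properties text with hosts.redundant set to true."""
    updated, count = _REDUNDANT_LINE.subn(
        lambda match: match.group(1) + "true", properties_text
    )
    if count == 0:
        # Refuse to guess where the setting should go if it is absent.
        raise ValueError("hosts.redundant setting not found")
    return updated
```

All other lines are left untouched, so the result can be written back over the original file after taking a backup.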

Notes:

- To verify that the above process is complete and worked correctly, you must do a full file comparison of the dev, primary, and secondary file systems to confirm that they are mirrored properly. If any custom files from the dev or primary system are missing from the secondary system, then you must modify the configuration of the filesexport command to include the additional customization files that did not get archived. For more information on this, see the filesexport page.

- Database connection settings are replicated automatically by the process above, because they are stored in the persist.properties file.

- Each time an enPortal/AppBoard instance is started, any load files in /server/webapps/enportal/WEB-INF/xmlroot/server/ which do not have the extension ".disabled" will be executed and the default XML content will be loaded into the database. Once the default content has been initially loaded into the database, you can remove the load files from the other instances before starting them, to prevent the XML content from being auto-loaded again into the database when that instance is started. This step is only necessary if a server node has a new installation that is being started for the first time. The load files to remove are the following:

  - load_reloadContent.txt

  - load_restore.txt

  - load_stock.txt

- Verify that the primary and secondary instances are running against a shared database by logging in to the admin UI, adding a temporary user on each instance, and confirming that the user is shown in the other instances. Note: due to database caching, there may be a delay before changes made to the database from one instance will be directly observed in another instance.

- The default filesexport bundle includes the license.properties file. You typically do not want to copy the license file from the primary server to the secondary server(s), since it will not work on a server that has a different IP address. You can rename the license.properties file to something like license-prod2.properties so that it is not overwritten. Alternatively, you can allow the file to be overwritten and then manually reinstate the license.properties file from a backup after executing the filesimport command.

- The default filesexport bundle includes the server.xml file, which includes some of the basic Tomcat settings (such as port number). If you have different settings for server.xml on different servers, you can allow the file to be overwritten and then manually reinstate the server.xml file from a backup after executing the filesimport command.

- The default filesexport bundle includes the persist.properties file, which manages the database connection. Procedures for managing this file may vary. Typically this file is the same across all production servers, but some care may be needed when migrating from a dev server to a prod server, which may use different databases and thus have different persist.properties settings.
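The full file comparison called for in the first note can be approximated with a short script. This is a minimal sketch using Python's standard `filecmp` module, assuming both installation trees are reachable from one machine (for example as mounted or copied directories). The function name `diff_trees` is hypothetical.

```python
import filecmp
from pathlib import Path


def diff_trees(reference: str, candidate: str) -> dict:
    """List files missing from either tree and files whose contents differ."""
    result = {"only_in_reference": [], "only_in_candidate": [], "different": []}

    def walk(comparison, prefix):
        for name in comparison.left_only:
            result["only_in_reference"].append(str(prefix / name))
        for name in comparison.right_only:
            result["only_in_candidate"].append(str(prefix / name))
        # dircmp compares shallowly: files with the same size and
        # modification time are assumed equal without reading them.
        for name in comparison.diff_files:
            result["different"].append(str(prefix / name))
        for name, sub in comparison.subdirs.items():
            walk(sub, prefix / name)

    walk(filecmp.dircmp(reference, candidate), Path("."))
    return result
```

Entries under `only_in_reference` are candidates for files that the filesexport bundle did not pick up. Differences in license.properties or server.xml may be intentional, as described in the notes above.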

See Also

CITRIX NetScaler Server Appliance http://www.citrix.com/English/ps2/products/product.asp?contentID=21679&ntref=hp_nav_US