Appboard/2.6/admin/clustering and failover

Overview

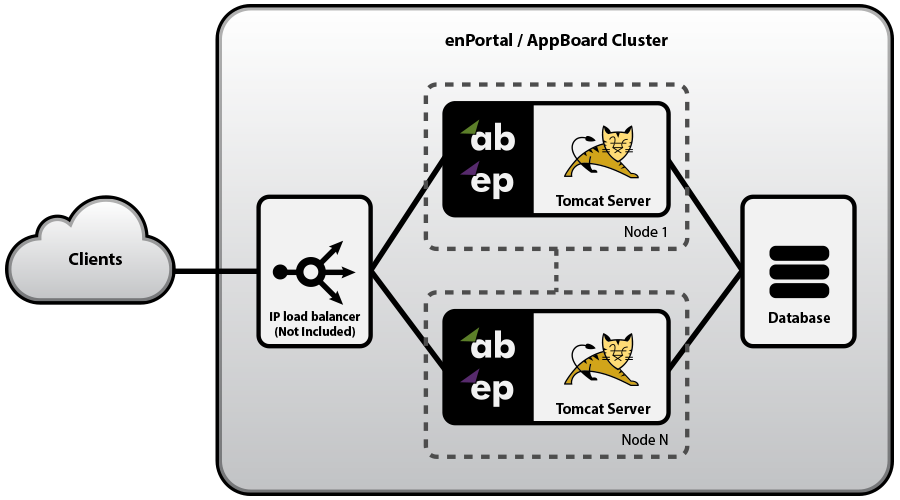

AppBoard is implemented using a highly scalable web application architecture. As a Java application running inside an Apache Tomcat server, AppBoard can make use of multi-core and multi-processor systems with large amounts of RAM on 64-bit operating systems. In addition to scaling vertically on a single system, AppBoard supports horizontal scaling through a shared configuration database, either to handle greater loads or to provide high availability. AppBoard can be used in the following configurations:

- Load Balanced: Two or more nodes are fully operational at all times. The load balancer directs traffic to nodes using standard load balancing techniques such as round-robin, fewest sessions, or smallest load. If a node is detected as down, it is removed from the active pool.

- Failover: A two-node configuration in which both nodes are running, but all traffic is routed to the primary node unless it is detected to be down, at which point the load balancer redirects traffic to the secondary node.

- Cold Standby: A two-node configuration in which the secondary node is offline during normal operation. If the primary node is detected to be down, the secondary node is brought online and traffic is redirected to it.
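To make the difference between the first two modes concrete, here is a minimal Python sketch of how a load balancer might choose a node in each. It is illustrative only: `LoadBalancedPool` and `pick_failover` are hypothetical names, and a real load balancer implements this logic internally. Cold standby follows the same routing rule as failover; the difference is that the secondary node is only started once the primary has failed.

```python
class LoadBalancedPool:
    """Load Balanced mode: round-robin across nodes not marked as down."""

    def __init__(self, nodes):
        self.nodes = list(nodes)
        self.down = set()  # nodes that failed their health check
        self._next = 0     # round-robin position

    def mark_down(self, node):
        self.down.add(node)  # removed from the active pool

    def mark_up(self, node):
        self.down.discard(node)  # returned to the active pool

    def pick(self):
        # Try each node at most once, skipping any that are down.
        for _ in range(len(self.nodes)):
            node = self.nodes[self._next % len(self.nodes)]
            self._next += 1
            if node not in self.down:
                return node
        raise RuntimeError("no healthy AppBoard node available")


def pick_failover(primary, secondary, down):
    """Failover mode: all traffic goes to the primary unless it is down."""
    if primary not in down:
        return primary
    if secondary not in down:
        return secondary
    raise RuntimeError("no healthy AppBoard node available")
```

For example, `LoadBalancedPool(["node1", "node2"])` alternates between the two nodes; after `mark_down("node1")` every pick returns `node2` until the node is marked up again.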

Where high availability is required, a cluster configuration is recommended regardless of load. Where load is the main concern, refer to the Performance Tuning & Sizing documentation for more information.

Architecture & Licensing

Whether running a load-balanced, failover, or cold-standby configuration, the following components are required:

- An AppBoard installation on each node. Each node requires its own license.

- An external (shared) configuration database. This database is not provided by Edge and should preferably reside on a different host from the AppBoard servers. In high-availability environments the database itself should also be highly available. See the System Requirements for supported external configuration databases.

- A load balancer. This component is not provided by Edge but is required in cluster configurations.

Cluster Configuration

The overall cluster configuration is made up of separate parts that follow the cluster architecture:

- Load Balancer configuration

- Shared AppBoard configuration: via an external shared configuration database.

- Per-node AppBoard configuration and filesystem assets.

Note also that establishing a new cluster and maintaining an existing one call for different approaches, as outlined below.

In simple single-server AppBoard configurations, the built-in, in-process H2 configuration database is recommended. In cluster configurations, however, the configuration must be shared and kept in sync across two or more nodes, so an external configuration database is required. Setting up an external database for redundant operation involves two main steps:

- Configure AppBoard to use an external database (instead of the built-in H2). This process is documented separately on the Configuration Database page.

- Configure AppBoard to operate in redundancy mode.

Per-Node Configuration & Assets

While the shared configuration database takes care of AppBoard content, enPortal content, and provisioning information, other configuration settings and filesystem assets must be maintained on each node:

- license file

- configuration database connection details

- all other filesystem assets, such as login pages, look-and-feel images, visualization images, local data source files, enPortal PIM files, and any other pieces built into the solution, such as custom JSPs, CGIs, and HTML/CSS/JS files.

The recommended approach, which also ensures that full backups of the system are made, is to configure the Backup export list to include all filesystem assets. When establishing or updating a cluster, that archive can then be used to maintain the filesystem components. Other custom approaches, such as filesystem synchronization tools, may also be suitable.

The license and database connection details need to be set up when first establishing the cluster, but do not need to be changed after that.

Load Balancer

The load balancer can distribute sessions across one or more AppBoard nodes using any standard load balancing algorithm (e.g. round-robin, smallest load, fewest sessions). The only requirement is that session affinity be maintained, so that a given user is always routed to the same AppBoard node for the full duration of their session.

AppBoard uses two session cookies: JSESSIONID and enPortal_sessionid. When configuring session affinity on the load balancer, it is recommended to use enPortal_sessionid to avoid conflicts with other applications that may also set a JSESSIONID cookie.
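As an illustration of cookie-based session affinity, the following minimal Python sketch assumes a load balancer that learns which node issued each enPortal_sessionid and routes later requests carrying that cookie back to the same node. `StickyRouter`, `route`, and `learn` are hypothetical names; in practice this behavior is configured in the load balancer product rather than written by hand.

```python
from http.cookies import SimpleCookie

AFFINITY_COOKIE = "enPortal_sessionid"  # preferred over JSESSIONID


class StickyRouter:
    def __init__(self, nodes):
        self.nodes = list(nodes)
        self.sessions = {}  # enPortal_sessionid value -> node
        self._rr = 0        # round-robin position for new sessions

    def _session_id(self, cookie_header):
        # Parse the request's Cookie header and extract the affinity cookie.
        cookies = SimpleCookie()
        cookies.load(cookie_header or "")
        morsel = cookies.get(AFFINITY_COOKIE)
        return morsel.value if morsel else None

    def route(self, cookie_header):
        sid = self._session_id(cookie_header)
        if sid in self.sessions:
            return self.sessions[sid]  # existing session: same node
        node = self.nodes[self._rr % len(self.nodes)]  # new session
        self._rr += 1
        return node

    def learn(self, session_id, node):
        """Record the node that issued a new enPortal_sessionid."""
        self.sessions[session_id] = node
```

A request carrying only JSESSIONID is treated as a new session, which is why keying on enPortal_sessionid avoids interference from other applications' cookies.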

The following URL can be used by the load balancer as a means of testing AppBoard availability:

http://server:port/enportal/check.jsp

This script returns HTTP status code 200 (OK) if all components of AppBoard are running properly, or 500 (Internal Server Error) if there is an issue. If the AppBoard server is not running at all, there is no response, which the load balancer should also treat as a failure.
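The sketch below shows how a monitoring script or custom health check might interpret check.jsp responses using only the Python standard library. The function name and the "up" / "failed" / "down" labels are illustrative assumptions; substitute the real host and port for the `server:port` placeholder in the URL.

```python
import urllib.error
import urllib.request


def check_appboard(url, timeout=5.0):
    """Classify an AppBoard node from its check.jsp response.

    'up'     -> HTTP 200
    'failed' -> an HTTP error status such as 500 (server running, component issue)
    'down'   -> no HTTP response at all (refused, timed out, unreachable)
    """
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return "up" if resp.status == 200 else "failed"
    except urllib.error.HTTPError:
        # Must be caught before URLError, of which HTTPError is a subclass.
        return "failed"
    except (urllib.error.URLError, OSError):
        return "down"
```

A load balancer or monitor would call this periodically, e.g. `check_appboard("http://appboard1.example:8080/enportal/check.jsp")` with your own host name, and remove the node from the active pool for any result other than "up".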

Virtualized Environments

Whether running on bare metal or in a virtualized environment, the clustering configuration remains the same.

Some virtualization environments offer their own layer of fault tolerance, although this is usually aimed at reducing or eliminating the impact of hardware failure (e.g. VMware Fault Tolerance, which transparently fails a guest over from a failed physical host to a different physical host so that everything continues uninterrupted). This type of system is useful on its own but may not detect application-level failures, which can also occur.

Establishing a Cluster

The following process can be used to establish a cluster environment. Make sure you have read the previous sections to understand the overall architecture and configuration components.

The process assumes an existing environment, although a clean installation can also be used.

Initial Preparation

- Set up an external database to serve as the shared configuration database and note its access details. Do not configure AppBoard to use the external database at this stage.

- Set AppBoard to operate in redundancy mode. This is enabled using the following setting in [INSTALL_HOME]/server/webapps/enportal/WEB-INF/config/custom.properties:

- hosts.redundant=true

- Create a full backup archive of your existing system. Ensure this backup has been configured to include all custom filesystem assets. Refer to the Backup & Recovery page for more information. It is recommended that this archive also include all required JDBC database drivers, including the JDBC driver for the external configuration database.

- Have two or more systems ready to be configured for clustering. Note that the existing system can be one of these, without reinstalling.

- Have the AppBoard turnkey distribution and license files for each node.
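The hosts.redundant setting from the preparation steps can be added by hand in any text editor. If you prefer to script it across several nodes, the following minimal Python sketch sets the property idempotently, assuming custom.properties is a plain Java-style properties file using `=` or `:` as the separator. `enable_redundancy` is a hypothetical helper, not part of AppBoard.

```python
import re
from pathlib import Path

# Matches an active (uncommented) hosts.redundant line, whatever its value.
_PATTERN = re.compile(r"^[ \t]*hosts\.redundant[ \t]*[=:].*$", re.MULTILINE)


def enable_redundancy(properties_path):
    """Ensure the file contains hosts.redundant=true (assumed properties format)."""
    path = Path(properties_path)
    text = path.read_text(encoding="utf-8") if path.exists() else ""
    if _PATTERN.search(text):
        # Overwrite the existing value in place.
        text = _PATTERN.sub("hosts.redundant=true", text)
    else:
        # Append the setting on its own line.
        if text and not text.endswith("\n"):
            text += "\n"
        text += "hosts.redundant=true\n"
    path.write_text(text, encoding="utf-8")
```

Point it at [INSTALL_HOME]/server/webapps/enportal/WEB-INF/config/custom.properties on each node; running it twice leaves the file unchanged.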

Setup Process

The following applies to the primary node. Even in a purely load-balanced configuration, pick one of the nodes as the primary for the purpose of establishing the cluster:

- If converting the existing deployment into a cluster deployment, first shut down AppBoard.

- Otherwise, deploy the AppBoard turnkey and ensure a valid environment. See the Installation documentation for more info.

- Configure AppBoard to use an external configuration database. Follow the instructions to use dbsetup on the Configuration Database page, but do not restore any archives or perform a dbreset.

- Load the complete backup archive created in the initial preparation:

- portal Apply -jar archive.jar

- Install the license file if it is not already installed; remember that the license is node-specific.

- Start AppBoard. At this point you should have a working cluster of one node!

- Verify that the AppBoard catalina.out log file contains the following lines:

- Establishing a connection to the external configuration database:

- Connecting to the DB_TYPE database at jdbc:mysql://HOST:PORT/DB_NAME...Connected.

- Redundancy mode is enabled:

- date@timestamp Redundancy support enabled

- Also verify that you can log into this instance of AppBoard and that all content is as expected.
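The catalina.out checks in the primary-node steps can be automated with a simple text search. The minimal Python sketch below looks for fragments of the two log lines quoted in those steps; `verify_catalina_log` is a hypothetical helper, and because the exact log wording may differ between releases, the fragments are assumptions to adjust against your own log.

```python
from pathlib import Path

# Fragments of the expected log lines (assumed stable; adjust if needed).
EXPECTED = {
    "external configuration database": ("Connecting to the ", "...Connected."),
    "redundancy mode": ("Redundancy support enabled",),
}


def verify_catalina_log(log_path):
    """Return {check name: True/False}; a check passes if a single line
    contains all of that check's fragments."""
    text = Path(log_path).read_text(encoding="utf-8", errors="replace")
    lines = text.splitlines()
    return {
        name: any(all(part in line for part in parts) for line in lines)
        for name, parts in EXPECTED.items()
    }
```

Any False result means the node did not connect to the external database or did not start in redundancy mode, and the configuration should be reviewed before adding further nodes.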

The following applies to all other nodes in the cluster:

- Deploy the AppBoard turnkey and ensure a valid environment. See the Installation documentation for more info.

- Load the complete backup archive created in the initial preparation. Note that this command differs from the one used on the primary node:

- portal FilesImport -skiplist apply -jar archive.jar

- Prevent the database configuration from being loaded at AppBoard startup by appending .disabled to the names of the following files:

- [INSTALL_HOME]/server/webapps/enportal/WEB-INF/xmlroot/server/load_*.txt

- [INSTALL_HOME]/server/webapps/enportal/WEB-INF/xmlroot/appboard/load_*.txt

- [INSTALL_HOME]/server/webapps/enportal/WEB-INF/xmlroot/appboard/config/stacks.xml

- Configure AppBoard to use an external configuration database. Follow the instructions to use dbsetup on the Configuration Database page, but do not load any archives or perform a dbreset.

- Install the license file if it is not already installed; remember that the license is node-specific.

- Start AppBoard. You should now have a cluster of two or more nodes!

- Verify this instance in the same way as the primary instance.
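On secondary nodes, the file-renaming step can be scripted. This minimal Python sketch appends .disabled to every file matching the three paths listed in that step, relative to [INSTALL_HOME]; `disable_startup_loads` is a hypothetical helper, and a `dry_run` option is included so you can review the matches before renaming anything.

```python
from pathlib import Path

# Paths relative to [INSTALL_HOME], as listed in the secondary-node steps.
PATTERNS = [
    "server/webapps/enportal/WEB-INF/xmlroot/server/load_*.txt",
    "server/webapps/enportal/WEB-INF/xmlroot/appboard/load_*.txt",
    "server/webapps/enportal/WEB-INF/xmlroot/appboard/config/stacks.xml",
]


def disable_startup_loads(install_home, dry_run=False):
    """Append .disabled to each matching file and return the new paths.

    Already-disabled files no longer match the patterns, so a second
    run renames nothing.
    """
    home = Path(install_home)
    renamed = []
    for pattern in PATTERNS:
        for path in sorted(home.glob(pattern)):
            target = path.with_name(path.name + ".disabled")
            if not dry_run:
                path.rename(target)
            renamed.append(target)
    return renamed
```

Run it with AppBoard stopped, before configuring the external database and starting the node.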

To verify the whole cluster, log into the separate nodes from different locations, open the Session Management system administration page, and check that all sessions are visible regardless of which node you are logged into.

Maintaining a Cluster

Product Updates

For full product updates, a process similar to establishing a new cluster is required. Create a full archive and test it in isolation with the new build to ensure there are no upgrade issues. If changes are needed because of the upgrade, produce a new full archive on the new product version for use when establishing the cluster. Because the entire cluster must be taken down and re-established, this process requires scheduled downtime.

In high-availability environments where scheduled downtime is not possible, an alternative is to take down one node, re-establish it as a new cluster using a different external configuration database, and then fail over all clients to that node. The remaining nodes can then be re-established against the new configuration database, and the load balancer configuration updated to bring all nodes back online.

Patch updates are usually overlaid onto an existing installation and do not modify configuration. They can be applied in place and typically require only a restart of the AppBoard server.

Content/Configuration Updates

Content and provisioning changes made directly on the cluster are immediately available to all cluster nodes and nothing further needs to be done.

This section covers updates made in a separate development or staging environment that need to be moved to production. There are two approaches:

- Complete updates using full archives. This essentially requires re-establishing the cluster, with the shortcut that AppBoard is already installed and licensed and the database configuration is already in place.

- AppBoard-only content updates. This covers Stacks, Boards, Widgets, Data Collections, Data Sources, Session Variables, and Theme configuration, which can be exported independently of the system configuration and then imported and merged into another system. Refer to the Import/Export Utility documentation. New content can be built on a development system, then exported and merged into production. Because this is content configuration only, it needs to be imported onto just one node in the cluster and does not require any downtime.